Beyond the Scorecard: What Your Agent Performance Reports Are Actually Trying to Tell You

For many operations leaders, the monthly performance review is an exercise in high-stakes guesswork. You stare at a dashboard flashing a “93%” composite score. On the surface, it signals success — a green light for your frontline operations. But beneath that number lies a dangerous blind spot.

Does that 93% represent a consistently elite performer, or is it a mask for systemic compliance risks that simply haven’t been caught yet?

The Purpose of Performance: Why This Report Exists

The Agent Service Performance Report was designed to bridge this gap. It exists to answer a fundamental operational question: Are our service conversations consistently delivering quality, compliance, and customer confidence — and where are the risks?

Traditional QA reporting often focuses on scores alone, but scores are “what” numbers. They fail to explain the “why.” This report moves beyond the surface level to analyse:

- Quality Assurance & Compliance: Distinguishing agents with strong CX but weak compliance from those with the reverse profile.

- Business Process Execution: Mapping how agents handle specific call flows.

- Topic-Based Variation: Identifying whether an agent thrives in simple transactions but struggles with complexity.

- Behavioural Patterns: Separating isolated, one-off errors from repeat behavioural gaps.

In the traditional world of Quality Assurance (QA), managers are forced to play a game of statistical roulette, sampling a tiny fraction of calls. However, as defined by the “Architecting Excellence” and “CXEX” frameworks, the industry is shifting toward a more rigorous standard. The Agent Service Performance Report reflects that shift: it is an AI-synthesised narrative that transforms 100% of customer interactions into actionable intelligence, so that coaching becomes measurable and targeted rather than reactive.

1. The Empathy-Compliance Paradox

One of the most profound insights revealed by total interaction analysis is what we call the “Empathy-Compliance Paradox.” In a manual sampling environment, an agent who is “good with people” is often shielded from scrutiny. The data, however, frequently reveals a different story.

Consider the performance profile of an agent we’ll call Adam. During the March reporting period, Adam achieved a near-perfect 97% in Customer Experience (CX). He is a master of soft skills, lauded for his professionalism and warm tone. Yet his Compliance QA score sits at a troubling 78%, marking a 4% decline from the previous month.

This disparity highlights a critical operational truth: being “nice” does not mean being “correct.” As noted in the report’s summary:

“Adam demonstrates strong overall performance, consistently excelling in professionalism and empathy. However, there are recurring issues with caller verification and summarisation of next steps, which occasionally impact compliance and call closure quality.”

When an agent prioritises rapport over protocol, they protect the brand’s reputation in the short term while exposing the organisation to significant regulatory risk.
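The gap between the two scores can be made explicit rather than left buried in a composite. The following is a minimal sketch using Adam's reported CX and Compliance QA scores; the `AgentScores` type, the function names, and the 10-point `min_gap` threshold are illustrative assumptions, not part of the report itself.

```python
from dataclasses import dataclass


@dataclass
class AgentScores:
    name: str
    cx: float          # Customer Experience score, in percent
    compliance: float  # Compliance QA score, in percent


def paradox_gap(scores: AgentScores) -> float:
    """Points by which CX exceeds compliance (negative if compliance leads)."""
    return scores.cx - scores.compliance


def is_paradox(scores: AgentScores, min_gap: float = 10.0) -> bool:
    # min_gap is an assumed threshold chosen for illustration only.
    return paradox_gap(scores) >= min_gap


adam = AgentScores("Adam", cx=97.0, compliance=78.0)
print(paradox_gap(adam))  # 19.0
print(is_paradox(adam))   # True
```

A 19-point spread like this is easy to miss when only a blended score is shown, which is why it helps to report the two dimensions side by side.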

2. Performance Isn’t Static — It’s a Stress Test

We often view agent skill as a fixed attribute, but the data suggests otherwise. Performance is situational, dictated by cognitive load and emotional complexity.

The data shows that agents like Adam excel in routine tasks such as Billing & Payments. In these low-stress environments, procedural adherence is high. However, when the emotional stakes rise — such as in Claims or Cancellations — metrics often fracture.

These “Low Scoring Topics” act as a stress test. The emotional labour of managing a frustrated customer often overrides procedural memory. Identifying these nuances allows for surgical intervention rather than blanket retraining.
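One way to surface low-scoring topics is to average scores per topic and flag those below a cut-off. The sketch below uses made-up per-call scores purely for illustration (the report gives only "High" and "Low" signals for these topics), and the 80% threshold and function name are assumptions.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-call compliance scores, invented for this example.
calls = [
    ("Billing & Payments", 95),
    ("Billing & Payments", 92),
    ("Claims", 70),
    ("Claims", 66),
    ("Cancellations", 72),
    ("Cancellations", 74),
]


def low_scoring_topics(calls, threshold):
    """Return topics whose average score falls below the threshold."""
    by_topic = defaultdict(list)
    for topic, score in calls:
        by_topic[topic].append(score)
    averages = {topic: mean(scores) for topic, scores in by_topic.items()}
    return sorted(topic for topic, avg in averages.items() if avg < threshold)


print(low_scoring_topics(calls, threshold=80))  # ['Cancellations', 'Claims']
```

Breaking scores out this way points coaching at the specific call types where process breaks down, instead of at the agent's overall average.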

3. The Death of Manual Sampling

The shift toward AI-driven insights marks the definitive end of the manual sampling era. Traditional QA is a liability because it treats errors as isolated incidents. When you only hear 2% of calls, you cannot distinguish between a “bad day” and a “bad habit.”

By synthesising hundreds of interactions, AI-driven reporting provides a view of organisational health that was previously impossible. It enables leadership to differentiate between an isolated error and a repeat behavioural gap — moving from reactive damage control to evidence-based protection of the brand.
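A rough calculation shows why a small sample struggles to separate a habit from a one-off. The sketch below assumes, purely for illustration, an agent who handles 500 calls in a month and skips a required step on 10% of them, with QA reviewing 2% of calls; it uses a simple binomial model, and none of these figures come from the report.

```python
from math import comb


def prob_at_most(k: int, sample_size: int, failure_rate: float) -> float:
    """Binomial probability of seeing k or fewer failures in the sample."""
    return sum(
        comb(sample_size, i)
        * failure_rate**i
        * (1 - failure_rate) ** (sample_size - i)
        for i in range(k + 1)
    )


calls_handled = 500   # assumed monthly call volume
sample_rate = 0.02    # 2% of calls reviewed manually
failure_rate = 0.10   # assumed share of calls with the compliance gap
sample_size = round(calls_handled * sample_rate)  # 10 calls reviewed

# Chance the reviewer sees zero or one failure, which looks like a "bad day".
p = prob_at_most(1, sample_size, failure_rate)
print(f"{p:.2f}")  # 0.74
```

Under these assumptions, roughly three times out of four the reviewer sees at most one failure, so a genuine habit is indistinguishable from an isolated slip.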

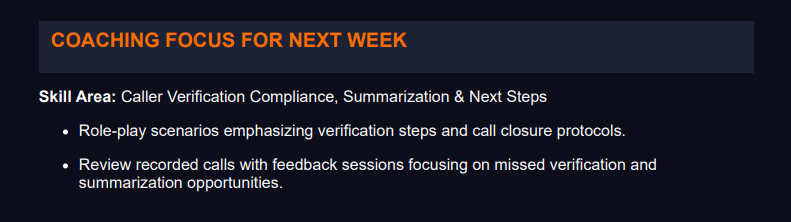

4. Coaching by Data, Not by Hunch

Without structured insight, coaching is merely reactive — a series of anecdotal corrections based on the “call of the day.” The “Architecting Excellence” framework suggests a more disciplined approach:

“Without structured insight, coaching becomes reactive. With it, improvement becomes measurable and targeted.”

The modern performance report provides a specific roadmap. Rather than a vague directive to “be more compliant,” the report identifies the exact failure point: Caller Verification Compliance. For Adam, the resolution is specific — implementing a standardised checklist for complex topics. This transforms the coaching session into a collaborative strategy session.

5. Tracking the “Closing” Comeback

The final takeaway is the ability to track the trajectory of growth. In a legacy system, an agent below the team average is a “problem.” In a narrative-driven system, we look for the comeback.

Take Adam’s Closing Techniques. Last month, his score was a dismal 30%. In the current period, it jumped to 60%. While still below the team average, the 30-point gain, a 100% relative improvement, is the real story. This trend data suggests that previous coaching was effective, allowing leaders to reward progress and double down on what is working.
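Because "percent improvement" on a percentage score can be read two ways, it helps to compute both. This minimal sketch uses Adam's Closing Techniques scores from the report; the function names are illustrative.

```python
def point_change(previous: float, current: float) -> float:
    """Absolute change in percentage points."""
    return current - previous


def relative_change_pct(previous: float, current: float) -> float:
    """Change relative to the previous score, expressed in percent."""
    return (current - previous) / previous * 100


previous_score, current_score = 30.0, 60.0
print(point_change(previous_score, current_score))         # 30.0
print(relative_change_pct(previous_score, current_score))  # 100.0
```

Reporting both figures avoids overstating or understating progress: the score doubled, yet it moved by 30 points and still sits below the team average.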

Snapshot: Adam’s Performance at a Glance

| Metric | Score | Signal |
|---|---|---|
| Customer Experience (CX) | 97% | Strong soft skills, high empathy |
| Compliance QA | 78% (↓ 4%) | Verification & closure gaps |
| Billing & Payments Topic | High | Procedural adherence solid |
| Claims / Cancellations Topics | Low | Emotional load breaks process |
| Closing Techniques | 30% → 60% | 100% MoM improvement |

Conclusion: From Raw Data to Actionable Insights

The future of service excellence does not lie in the accumulation of more data, but in the synthesis of that data into a coherent narrative. The goal is to transform conversations into action — moving away from box-checking exercises toward true operational intelligence.

As you review your next set of performance metrics, ask yourself: Is your current QA process actually developing your people, or are you just gambling on a 93%?

The answer is hidden in the 98% of calls you aren’t listening to.
